TL;DR: Many marketers think llms.txt is for SEO. It’s not. It’s for making your content cheaper and easier for AI tools to process, reducing token costs and friction for the growing number of AI-powered products your customers actually use.

I see discussions about llms.txt pop up all the time among digital marketers, and many seem confused about its purpose and potential. So let’s shed some light on it:

What is llms.txt?

llms.txt is a proposal designed by Jeremy Howard, an AI researcher, not a digital marketer! Let’s have a look at the definition:

A proposal to standardise on using an /llms.txt file to provide information to help LLMs use a website at inference time.

The key insight: Converting complex HTML pages with navigation, ads, and JavaScript into LLM-friendly plain text is both difficult and imprecise.
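
To make “LLM-friendly” concrete: the proposal describes llms.txt as plain markdown. It has an H1 naming the site (the only required part), an optional blockquote summary, and H2 sections that list links, each with optional notes. A section named “Optional” marks links a tool may skip when it needs a shorter context. Below is a minimal Python sketch of how an AI tool might read such a file. The sample content, the example.com URLs, and the `parse_llms_txt`/`urls_to_fetch` helpers are hypothetical, and a real parser would also need to handle free-form text and malformed input.

```python
import re
from dataclasses import dataclass, field

# Hypothetical sample file following the layout described in the proposal:
# an H1 title, an optional blockquote summary, then H2 sections of link lists.
SAMPLE_LLMS_TXT = """\
# Example Co

> Example Co makes hypothetical widgets. This file points AI tools at clean markdown pages.

## Docs

- [Quick start](https://example.com/docs/quickstart.md): Install and first steps
- [Pricing](https://example.com/pricing.md)

## Optional

- [Company history](https://example.com/about.md): Background reading
"""


@dataclass
class LlmsTxt:
    title: str = ""
    summary: str = ""
    # Maps each H2 section name to a list of (link name, url, notes) tuples.
    sections: dict[str, list[tuple[str, str, str]]] = field(default_factory=dict)


# Matches "- [name](url)" with an optional ": notes" suffix.
LINK_RE = re.compile(r"^-\s*\[([^\]]+)\]\(([^)]+)\)(?::\s*(.*))?$")


def parse_llms_txt(text: str) -> LlmsTxt:
    """Minimal parser sketch; ignores free-form paragraphs between summary and H2s."""
    doc = LlmsTxt()
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("# ") and not doc.title:
            doc.title = line[2:].strip()
        elif line.startswith("> ") and not doc.summary:
            doc.summary = line[2:].strip()
        elif line.startswith("## "):
            current = line[3:].strip()
            doc.sections[current] = []
        elif current is not None:
            match = LINK_RE.match(line)
            if match:
                name, url, notes = match.groups()
                doc.sections[current].append((name, url, notes or ""))
    return doc


def urls_to_fetch(doc: LlmsTxt, include_optional: bool = False) -> list[str]:
    """Collect link URLs, skipping the 'Optional' section unless asked for it."""
    return [url for section, links in doc.sections.items()
            if include_optional or section != "Optional"
            for _, url, _ in links]


doc = parse_llms_txt(SAMPLE_LLMS_TXT)
print(doc.title)           # Example Co
print(urls_to_fetch(doc))  # the two Docs URLs; the Optional link is skipped
```

The point is not the parser itself but how little work it takes: a tool gets a short list of clean markdown pages instead of having to crawl and clean your HTML.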

The Common Misconception

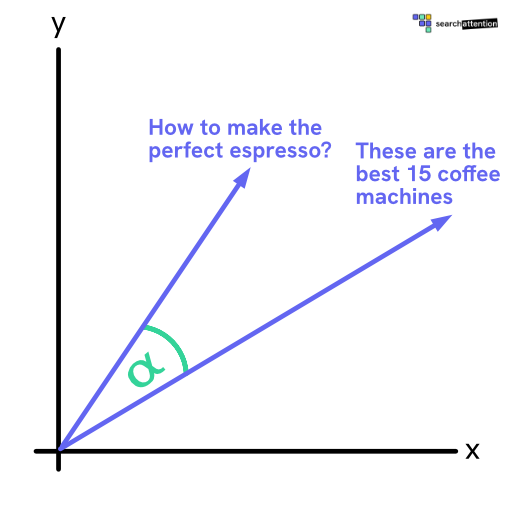

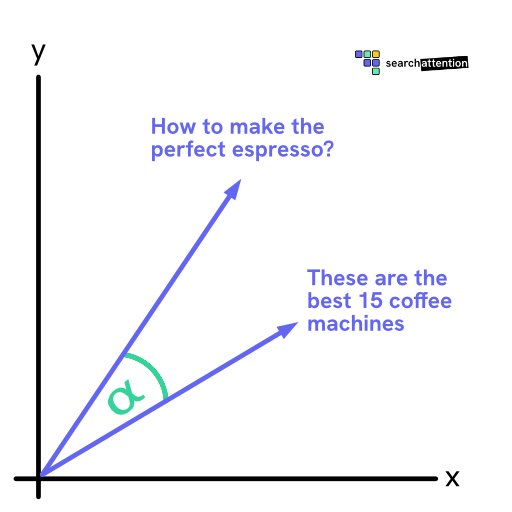

As you can see, the standard is about how websites can provide a format that is easy for an LLM to read. It is NOT about improving visibility in Google AI Overviews or Perplexity!

Let’s be frank: Google, Perplexity and ChatGPT don’t need llms.txt to index your page and remove all the boilerplate (ads, cookie banners, etc.). They already have sophisticated pipelines in place to do exactly that.

The REAL Marketing Opportunity

So why do I think marketers are missing out on huge potential?

If you step back a bit from the AI search engines, you have almost certainly noticed that nearly every product on the market now advertises built-in AI.

These tool providers usually don’t have their own search index. They could use their LLM provider’s built-in web search or run a proper scraper to read your website, but that is expensive for the company (e.g. $14 per 1,000 searches on Gemini, $10 per 1,000 searches on Anthropic), and search is often not useful when you need a specific page.
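
To see how those per-search prices add up, here is a back-of-the-envelope calculation in Python. Only the two prices come from the text above; the user count and lookups per user are hypothetical, and providers change their pricing, so check current rates before relying on the numbers.

```python
# Per-1,000-search prices quoted in the text (USD). Actual pricing varies
# by provider, model, and date, so treat these as illustrative.
PRICE_PER_1K_SEARCHES = {"Gemini": 14.00, "Anthropic": 10.00}


def monthly_search_cost(users: int, searches_per_user_per_day: float,
                        price_per_1k: float, days: int = 30) -> float:
    """Estimated monthly spend on provider-side web search for an AI tool."""
    total_searches = users * searches_per_user_per_day * days
    return total_searches / 1000 * price_per_1k


# Hypothetical tool: 10,000 users, each triggering 5 page lookups per day.
for provider, price in PRICE_PER_1K_SEARCHES.items():
    cost = monthly_search_cost(10_000, 5, price)
    print(f"{provider}: ${cost:,.2f}/month")
# Gemini: $21,000.00/month
# Anthropic: $15,000.00/month
```

Under these assumptions, 1.5 million searches a month cost $21,000 on Gemini and $15,000 on Anthropic, and that is before paying for the tokens needed to read the retrieved pages.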

Key Benefits for Marketers

- Reduced friction: Not providing an llms.txt makes it more difficult and expensive for LLMs used in AI tools to read and reason about your page!

- Better performance: A cleaned-up markdown version (without ads, header/footer, cookie banner, or newsletter signup) has a significantly lower token count, so the LLM can answer faster and more accurately about your content.

- Cost efficiency: It reduces processing costs for AI tools that integrate your content.
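
To illustrate the token savings, here is a minimal Python sketch that strips common page chrome from a small, hypothetical HTML page and compares rough token counts. The `BOILERPLATE_TAGS` set and the 4-characters-per-token estimate are assumptions for illustration; real tokenizers are model-specific, and real pages hide boilerplate in many other ways.

```python
from html.parser import HTMLParser

# Tags whose contents we treat as page chrome rather than content.
# This set is an assumption; real sites use many other patterns.
BOILERPLATE_TAGS = {"nav", "header", "footer", "aside", "script", "style", "form"}

# Hypothetical page: navigation, cookie banner, article, newsletter form, footer.
PAGE = """<html><head><style>body{font-family:sans-serif}</style></head><body>
<header><nav><a href="/">Home</a> <a href="/pricing">Pricing</a> <a href="/blog">Blog</a></nav></header>
<div class="cookie-banner">We use cookies. <button>Accept all</button></div>
<main><h1>Widget setup</h1><p>Connect the widget to your account in three steps.</p></main>
<form><input type="email"><button>Subscribe to our newsletter</button></form>
<footer>&copy; 2024 Example Co. All rights reserved.</footer>
</body></html>"""


class ContentExtractor(HTMLParser):
    """Collects visible text, skipping anything nested inside BOILERPLATE_TAGS."""

    def __init__(self) -> None:
        super().__init__()
        self.skip_depth = 0
        self.content_text: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in BOILERPLATE_TAGS:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in BOILERPLATE_TAGS and self.skip_depth > 0:
            self.skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self.skip_depth == 0:
            self.content_text.append(text)


def rough_tokens(text: str) -> int:
    # Crude heuristic: about 4 characters per token for English text,
    # rounded up. A model's real tokenizer will give different counts.
    return (len(text) + 3) // 4


extractor = ContentExtractor()
extractor.feed(PAGE)
cleaned = " ".join(extractor.content_text)
print(cleaned)
print("raw HTML ~", rough_tokens(PAGE), "tokens; cleaned ~", rough_tokens(cleaned), "tokens")
```

Note that the cookie banner survives because it is a plain `<div>`. Guessing which parts of a page are boilerplate is exactly the difficult, imprecise step that a clean markdown version lets AI tools skip.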

Smart Companies Are Already Doing This

That’s why many SaaS companies have started adding a ‘copy to markdown’ button to their websites, which makes it very easy to copy and paste content into whatever AI tool the user is currently working in.

Bottom Line for Marketers

llms.txt isn’t about SEO or search rankings. It’s about making your content more accessible and cost-effective for the growing ecosystem of AI-powered tools your customers use every day. By implementing it, you reduce friction for AI integration and position your content for the future of how people interact with information.